Set custom_headers=true in your request parameters to enable custom header functionality while maintaining ZenRows’ automatic header optimization.

How custom headers work

When you make a request to a website, your browser automatically includes dozens of HTTP headers that provide information about the request context, browser capabilities, and user preferences. These headers help servers understand:

- What type of content to return (HTML, JSON, XML)

- Where the request originated from (referrer information)

- What the browser can handle (encoding, language preferences)

- Authentication credentials (cookies, tokens)

- User preferences (language, timezone)

Enabling custom headers

To use custom headers, set the custom_headers parameter to true in your API request. This enables your custom headers while ZenRows continues to manage sensitive browser-specific ones automatically.

Basic custom header usage
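The following is a minimal sketch of a basic request with one custom header, using only the Python standard library. The endpoint https://api.zenrows.com/v1/, the apikey and url parameter names, and the YOUR_ZENROWS_API_KEY placeholder are assumptions that this section does not state, so check them against the API reference. The helper name build_request is hypothetical. The request is only built here, not sent.

```python
import urllib.parse
import urllib.request

# Assumed endpoint; this section does not state it.
ZENROWS_ENDPOINT = "https://api.zenrows.com/v1/"


def build_request(api_key, target_url, custom_headers):
    """Build (but do not send) a request that forwards custom headers."""
    params = {
        "apikey": api_key,         # assumed parameter name
        "url": target_url,         # assumed parameter name
        "custom_headers": "true",  # enables custom header forwarding
    }
    query = urllib.parse.urlencode(params)
    return urllib.request.Request(
        f"{ZENROWS_ENDPOINT}?{query}", headers=custom_headers
    )


request = build_request(
    "YOUR_ZENROWS_API_KEY",
    "https://httpbin.io/anything",
    {"Referer": "https://www.google.com"},
)
print(request.full_url)
# To send it: urllib.request.urlopen(request).read()
```

Pointing the target URL at an echo service such as httpbin.io/anything is a convenient way to see which headers actually reach the destination.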

Common header use cases

Referrer simulation

Control where the request appears to originate from by setting the Referer header. Setting a referrer can help you:

- Access content that’s restricted to specific traffic sources

- Bypass anti-bots that allow access from search engines

- Receive personalized content based on traffic source

- Avoid bot detection that checks for missing referrers
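As an illustration of referrer simulation, the sketch below builds a Referer value that resembles a Google results page. The helper name google_search_referer and the search query are hypothetical; the resulting dictionary would be sent as request headers together with custom_headers=true.

```python
from urllib.parse import urlencode


def google_search_referer(query):
    """Return a Referer value that looks like a Google results page."""
    return "https://www.google.com/search?" + urlencode({"q": query})


# Illustrative query; use one that plausibly leads to your target page.
headers = {"Referer": google_search_referer("running shoes")}
print(headers["Referer"])  # https://www.google.com/search?q=running+shoes
```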

Session management with cookies

Maintain authentication state across requests by including session cookies in the Cookie header.
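A Cookie header carries all cookies in a single string of the form key1=value1; key2=value2. The sketch below shows one way to build that string from a dictionary. The helper name format_cookie_header and the cookie names and values are hypothetical; copy the real ones from an authenticated session.

```python
def format_cookie_header(cookies):
    """Join name/value pairs into a single Cookie header value."""
    return "; ".join(f"{name}={value}" for name, value in cookies.items())


# Hypothetical cookies; replace with the ones your target site sets.
session_cookies = {"sessionid": "abc123", "csrftoken": "xyz789"}
headers = {"Cookie": format_cookie_header(session_cookies)}
print(headers["Cookie"])  # sessionid=abc123; csrftoken=xyz789
```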

Language, localization and currency

Request content in specific languages or regions using website-specific cookies. ZenRows automatically manages the Accept-Language header, but some websites use specific cookies to control currency, language, or localization settings.

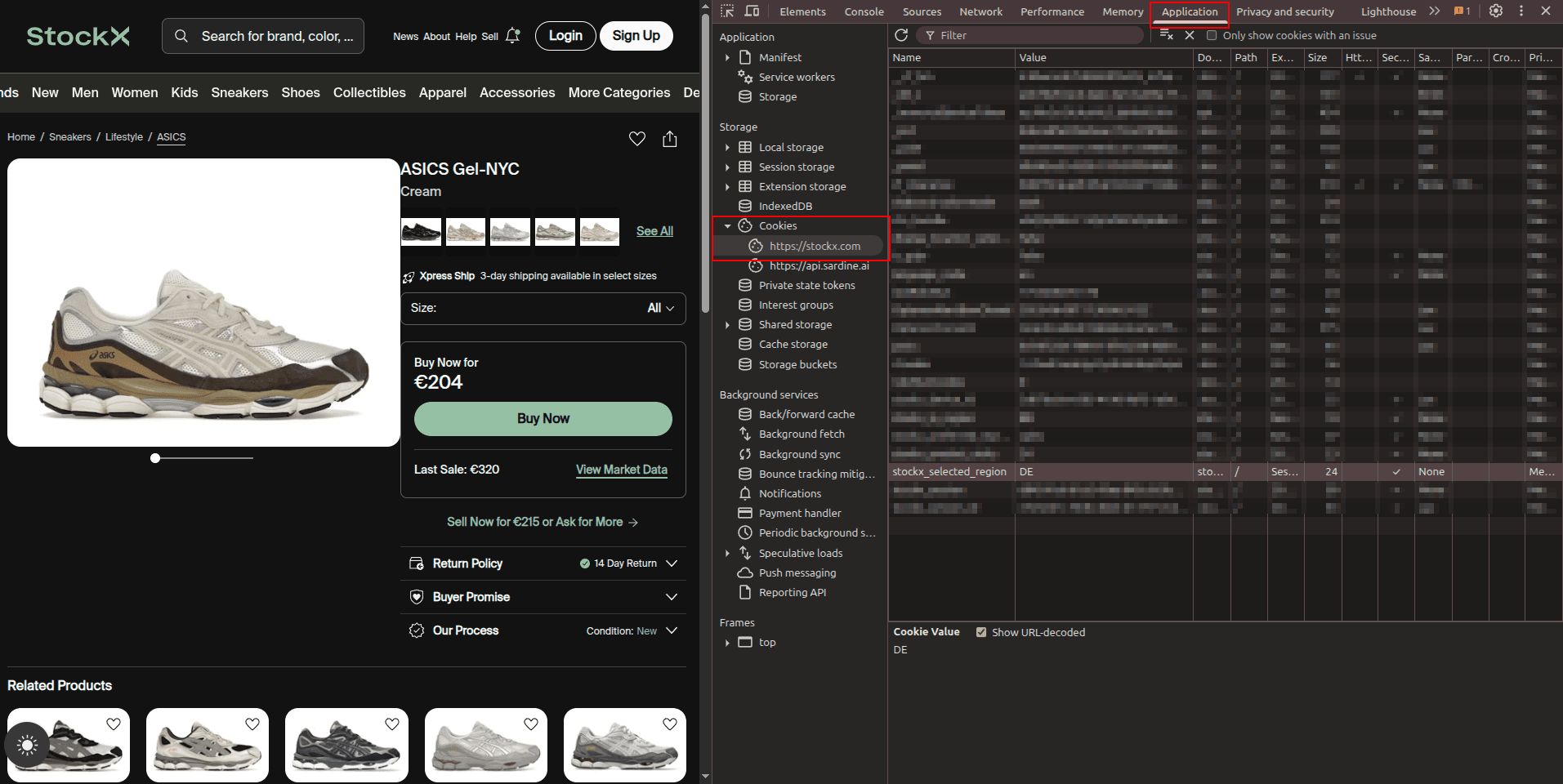

Consider the website stockx.com, which shows different currencies based on the stockx_selected_region cookie. Here’s how to find and use such cookies:

Find localization cookies in DevTools

- Open the target website in an incognito browser tab

- Open DevTools and go to the Application tab

- Navigate to Cookies and select the website URL

- Look for cookies that indicate a relation to language, localization, or currency

- Test by changing the cookie value in DevTools and refreshing the page
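Once you have found a localization cookie with the steps above, it can be added to the Cookie header alongside any cookies you already send. The sketch below assumes the stockx_selected_region cookie from the example; the value "DE" and the helper name with_cookie are illustrative, so confirm the real values in DevTools.

```python
def with_cookie(headers, name, value):
    """Return a copy of headers with one more cookie appended to Cookie."""
    updated = dict(headers)
    pair = f"{name}={value}"
    existing = updated.get("Cookie")
    # Append to an existing Cookie header instead of overwriting it.
    updated["Cookie"] = f"{existing}; {pair}" if existing else pair
    return updated


# "DE" is an illustrative value, not taken from the website.
headers = with_cookie({}, "stockx_selected_region", "DE")
print(headers)  # {'Cookie': 'stockx_selected_region=DE'}
```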

Best practices

Start with minimal headers

Only add custom headers when necessary. Unnecessary headers can increase detection risk, especially with high-volume scraping. Start with no custom headers and add them only when you encounter specific blocking or need particular functionality.

Analyze target behavior first

Before adding custom headers, check what the target website expects by examining real browser requests:

Research workflow:

- Open the target URL in an incognito/private browser tab

- Open DevTools (F12) and go to the Network tab

- Reload the page and examine the request headers

- For cookie-based functionality, check the Application tab in DevTools

- Only include headers that are essential for your use case

Header rotation for multiple requests

Vary headers across requests to avoid creating detectable patterns. Websites can detect if all requests come from the same referrer and may start blocking those requests. Rotating referrers helps simulate more natural browsing behavior from different traffic sources.
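A simple way to rotate referrers is to pick one at random for each request. The sketch below assumes a small illustrative pool; the list contents and the helper name rotated_headers are hypothetical and should be replaced with traffic sources that make sense for your target.

```python
import random

# Illustrative referrers; not a recommended or complete list.
REFERRERS = [
    "https://www.google.com",
    "https://www.bing.com",
    "https://duckduckgo.com",
]


def rotated_headers(rng=random):
    """Return headers with a Referer picked at random from the pool."""
    return {"Referer": rng.choice(REFERRERS)}


# Each request gets its own randomly chosen referrer.
for _ in range(3):
    print(rotated_headers())
```

Passing a seeded random.Random instance as rng makes the selection reproducible, which can help when debugging.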

Troubleshooting

Common header issues and solutions

| Issue | Cause | Solution |
|---|---|---|
| Headers ignored | custom_headers=true not set | Add custom_headers=true to request parameters |
| Authentication fails | Incorrect cookie format | Format cookies as proper cookie string: key1=value1; key2=value2 |
| 403 Forbidden errors | Missing required headers | Check target website’s requirements and include necessary headers |
| Localization not working | Wrong cookie format or value | Use DevTools to find correct cookie names and values |
| Session expired quickly | IP mismatch in cookies | Use session_id or obtain cookies through ZenRows and reuse them |
| Headers not taking effect | Conflicting header values | Ensure headers are consistent and don’t contradict each other |
| Website rate limiting triggered | Same headers for all requests | Rotate headers across requests to simulate natural browsing |

Debugging header problems

When headers aren’t working as expected:

Check for referrer-based access control

Some websites only allow access to specific pages if you first visit another page. This is because the initial page sets cookies necessary for subsequent pages.

Example: Amazon product page accessed from search results

Alternatively, use https://www.google.com as a referrer to simulate traffic from search engines.

Pricing

The custom_headers parameter doesn’t increase the request cost. The only features that increase request costs are Premium Proxy and JavaScript Render.

Frequently Asked Questions (FAQ)

What are custom headers and why would I use them?


Custom headers allow you to modify HTTP request headers like Referer, Accept, Cookie, or Authorization to control how the target server perceives your request. This is useful for handling authentication, simulating specific browser behaviors, requesting particular content types, bypassing certain restrictions, and maintaining session continuity across requests.

Which headers are automatically managed by ZenRows?

ZenRows automatically manages browser-environment headers, including User-Agent, Accept-Encoding, Sec-Ch-Ua, Sec-Fetch-Mode, Sec-Fetch-Site, Sec-Fetch-User, and other browser fingerprinting headers. These are optimized for high success rates and anti-bot protection. To maintain consistency and reliability, they cannot be customized.

Why can't I override certain headers like User-Agent?

Headers like User-Agent, Sec-Ch-Ua, and Accept-Encoding are closely tied to browser behavior and fingerprinting. ZenRows manages these automatically to ensure they match the browser environment being simulated, preventing detection by anti-bot systems that check for inconsistencies between headers and actual browser capabilities.

What happens if I don't set custom_headers=true?

Without custom_headers=true, any custom headers you include in your request will be ignored. ZenRows will use only its automatically managed headers. You must explicitly enable custom headers to have your additional headers included in the request to the target website.

Can I use custom headers with JavaScript rendering?

Yes, custom headers work with both static requests and JavaScript rendering (js_render=true). The headers are applied to the initial page request and any subsequent requests made during JavaScript execution, providing consistent header behavior throughout the rendering process.

What should I do if my custom headers aren't working?

First, verify that custom_headers=true is set in your parameters. Then check that your header format is correct and matches what the target website expects. Use debugging tools like httpbin.io/anything to verify that your headers are being sent correctly. Compare your headers with those sent by a real browser using developer tools, and ensure you’re not attempting to set headers that ZenRows manages automatically.
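When checking headers against an echo service, it helps to compare what you sent with what came back. The sketch below is one way to do that, assuming the echoed headers arrive as a JSON object whose values are either strings or lists of strings; that response shape, the sample data, and the helper name missing_headers are assumptions.

```python
def missing_headers(sent, echoed):
    """Return names of sent headers that are absent or changed in the echo."""
    normalized = {}
    for name, value in echoed.items():
        # Assumption: a value may be a single string or a list of strings.
        values = value if isinstance(value, list) else [value]
        # Header names are case-insensitive, so compare them in lowercase.
        normalized[name.lower()] = values
    return [
        name
        for name, value in sent.items()
        if value not in normalized.get(name.lower(), [])
    ]


sent = {"Referer": "https://www.google.com", "Cookie": "a=1"}
# Sample of what the echoed "headers" object might look like.
echoed = {"referer": ["https://www.google.com"], "User-Agent": ["Mozilla/5.0"]}
print(missing_headers(sent, echoed))  # ['Cookie']
```

A non-empty result points to headers that were dropped or rewritten, which usually means custom_headers=true is missing or the header is one that ZenRows manages automatically.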

Can I rotate headers across multiple requests?

Yes, you can vary headers across requests to avoid creating detectable patterns. Generate different combinations of referrers, language preferences, and other allowed headers for each request. This helps simulate more natural browsing behavior and can improve success rates when scraping multiple pages or making repeated requests to the same site.